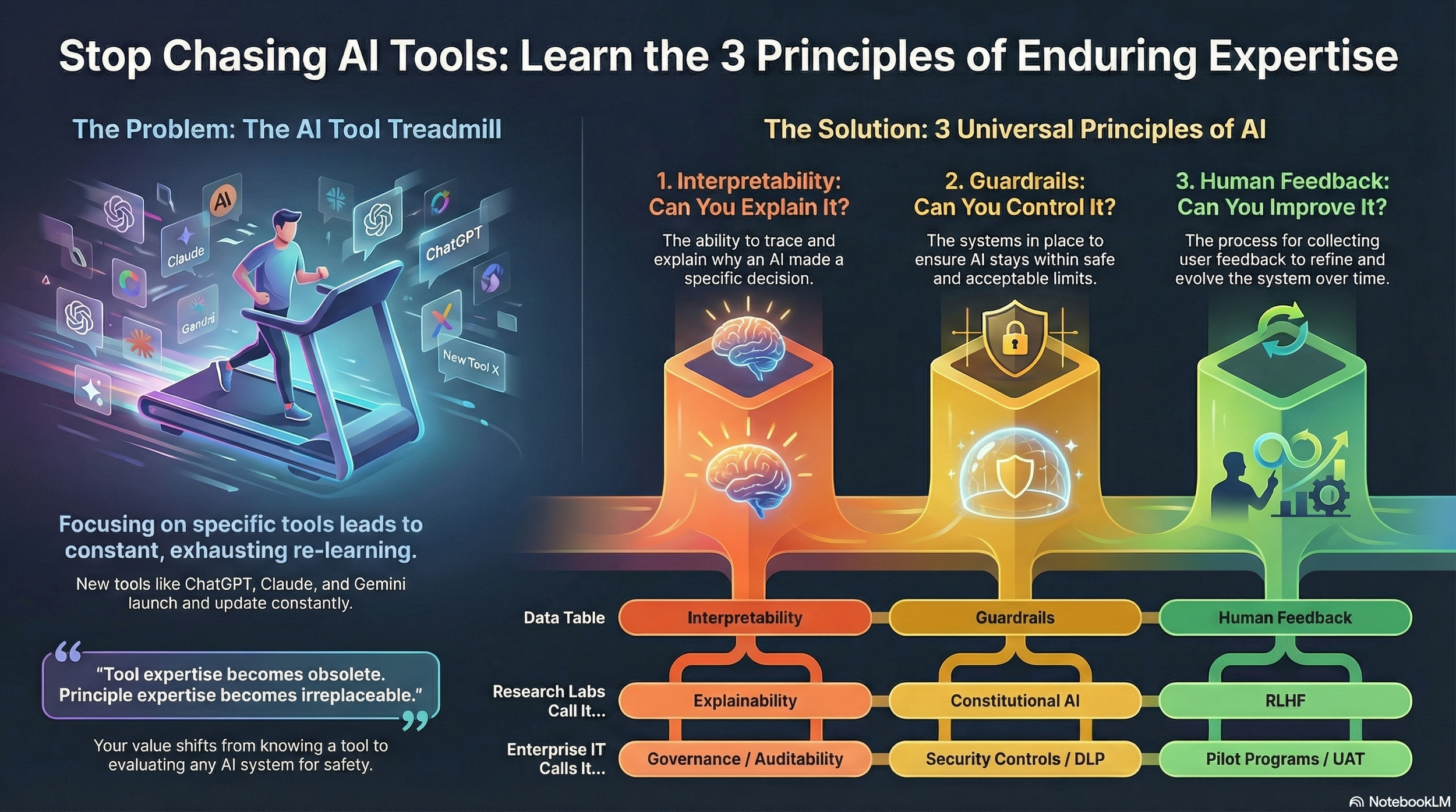

Stop Learning AI Tools. Learn These 3 Principles Instead.

You're Exhausting Yourself Learning the Wrong Thing

Everyone's learning AI wrong.

They're racing to master ChatGPT. Then Claude drops a new feature and they pivot. Then Microsoft announces Copilot updates and they scramble again. Then Google releases Gemini and the cycle repeats. Every week brings a new tool, a new interface, a new set of prompts to memorize.

They think AI expertise means knowing which buttons to push in which tools.

Here's what I've discovered after months of implementing AI systems and watching this pattern across enterprises, research labs, and startups: there are only three principles that matter across EVERY AI platform. Once you understand these, you stop drowning in tools and start seeing patterns everywhere.

You become the person who can evaluate any AI system, work with any platform, and actually future-proof your career instead of constantly re-learning surface features.

Let me show you what changed everything for me.

The Pattern I Couldn't Unsee

I noticed this while working with teams implementing different AI platforms. Some were deploying Microsoft Copilot in government agencies. Others were building with Anthropic's Claude at startups. Still others were using Google's tools or AWS infrastructure.

On the surface, everything looked different—different interfaces, different vendors, different technical stacks, different vocabulary. But when I started asking deeper questions about how they were actually deploying these systems safely, the same three challenges kept appearing. Just worded differently.

The Microsoft enterprise teams talked about "governance" and "DLP policies" and "audit trails." The research teams at places like Anthropic and DeepMind talked about "interpretability" and "constitutional AI" and "RLHF." The cloud platform teams talked about "security controls" and "monitoring" and "feedback loops."

Different words. Same fundamental problems.

That's when it clicked: the entire AI field is converging on three universal principles, whether its practitioners realize it or not. And if you understand these principles, you don't need to chase every new tool that launches. You can evaluate anything.

The Three Principles That Actually Matter

Forget the tool-specific tutorials. Here are the only three things you need to understand about any AI system:

1. Interpretability: Can You Explain It?

The universal question: Why did the AI do that?

This shows up everywhere, just with different vocabulary:

Research labs call it "interpretability" or "explainability"

Enterprise IT calls it "governance" or "auditability"

Compliance teams call it "transparency"

DevOps calls it "observability"

But they're all asking the same thing: Can you trace AI behavior back to its source? Can you predict what it will do? Can you explain decisions to stakeholders?

If you can't answer these questions, you have an interpretability problem—regardless of which AI tool you're using.
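For readers who build these systems, here is what the most basic form of "can you trace it back?" might look like in code. It's a minimal Python sketch that assumes you control the code that calls the model. `AuditedModel`, `call_model`, and `fake_model` are hypothetical names, and the stand-in model is a stub, not any vendor's API. Note that it only records what went in and what came out; it doesn't reveal the model's internal reasoning, which is the harder problem interpretability research works on.

```python
import json
from dataclasses import asdict, dataclass, field
from datetime import datetime, timezone
from typing import Callable, List, Optional


@dataclass
class AuditRecord:
    timestamp: str
    model: str
    prompt: str
    output: str
    context_sources: List[str] = field(default_factory=list)


class AuditedModel:
    """Wraps a model call so every request/response pair is recorded."""

    def __init__(self, model_name: str, call_model: Callable[[str], str]) -> None:
        self.model_name = model_name
        self.call_model = call_model  # hypothetical: any function that maps a prompt to text
        self.log: List[AuditRecord] = []

    def ask(self, prompt: str, context_sources: Optional[List[str]] = None) -> str:
        output = self.call_model(prompt)
        self.log.append(
            AuditRecord(
                timestamp=datetime.now(timezone.utc).isoformat(),
                model=self.model_name,
                prompt=prompt,
                output=output,
                context_sources=list(context_sources or []),
            )
        )
        return output

    def explain(self, index: int) -> str:
        """Replay exactly what went in and came out for one past decision."""
        return json.dumps(asdict(self.log[index]), indent=2)


# Stand-in model for illustration; not a real vendor API.
def fake_model(prompt: str) -> str:
    return "Approve refund" if "refund" in prompt else "Escalate to a human"


bot = AuditedModel("demo-model", fake_model)
bot.ask("Customer requests a refund for order 123", ["refund_policy.md"])
print(bot.explain(0))
```

In a real deployment you would write these records to durable storage rather than an in-memory list, so they survive long enough to answer an auditor.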

2. Guardrails: Can You Control It?

The universal question: What can this AI NOT do?

Again, different communities use different terms:

Research teams talk about "safety layers" and "constitutional AI"

Enterprise teams talk about "security controls" and "access policies"

Developers talk about "constraints" and "validation"

IT teams talk about "DLP" and "permission boundaries"

But everyone's solving the same challenge: How do you ensure AI stays within acceptable limits? What happens when it encounters something outside its scope?

Every responsible AI deployment, from Microsoft Copilot to Anthropic's Claude to Google Gemini, implements guardrails. The specific mechanisms differ, but the principle is universal.
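A guardrail can be as simple as a check that runs before and after the model. This Python sketch assumes a hypothetical assistant limited to three support topics, plus a toy DLP-style rule that redacts email addresses from responses. The topic list, the refusal message, and the regex are illustrative assumptions, not how Copilot, Claude, or Gemini implement their own controls.

```python
import re
from typing import Callable

# Hypothetical policy values, for illustration only.
ALLOWED_TOPICS = {"billing", "shipping", "returns"}
EMAIL_PATTERN = re.compile(r"[\w.+-]+@[\w-]+\.[\w.-]+")
REFUSAL = "Sorry, that request is outside what this assistant is allowed to handle."


def guarded_call(topic: str, prompt: str, call_model: Callable[[str], str]) -> str:
    # Input guardrail: refuse anything outside the declared scope before the model sees it.
    if topic not in ALLOWED_TOPICS:
        return REFUSAL
    output = call_model(prompt)
    # Output guardrail: a toy DLP rule that redacts email addresses in the response.
    return EMAIL_PATTERN.sub("[REDACTED EMAIL]", output)


# Stand-in model that leaks an email address, so the output check has something to catch.
def fake_model(prompt: str) -> str:
    return "Contact jane.doe@example.com about your refund."


print(guarded_call("legal", "Draft a contract for me", fake_model))
print(guarded_call("returns", "Where do I send my return?", fake_model))
# -> Contact [REDACTED EMAIL] about your refund.
```

The sketch also assumes the topic is already known. Deciding whether a free-form request is in scope is a hard problem in its own right, which is why "what happens when it encounters something outside its scope?" deserves an explicit answer.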

3. Human Feedback: Can You Improve It?

The universal question: How do you know if this is actually working?

Same pattern, different vocabulary:

Research labs call it "RLHF" (Reinforcement Learning from Human Feedback)

Product teams call it "user testing" and "iteration"

Enterprise teams call it "pilot programs" and "UAT" (user acceptance testing)

Agile teams call it "continuous improvement"

The core principle: AI systems must evolve based on how humans actually use them and what they actually need.

Whether you're fine-tuning a model or adjusting an enterprise deployment, you need systematic ways to collect feedback and make improvements.
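Systematic feedback starts with capturing it in a form you can compare over time. This Python sketch assumes a thumbs-up/thumbs-down signal tagged with the version of the prompt or configuration that produced each answer; the class names and the "v1"/"v2" labels are hypothetical. It's a collection mechanism, not RLHF: nothing here retrains a model.

```python
from collections import defaultdict
from dataclasses import dataclass
from typing import Dict, List


@dataclass
class Feedback:
    version: str   # which prompt/model/configuration produced the answer
    helpful: bool  # a simple thumbs-up (True) or thumbs-down (False)
    comment: str = ""


class FeedbackLog:
    def __init__(self) -> None:
        self.entries: List[Feedback] = []

    def record(self, version: str, helpful: bool, comment: str = "") -> None:
        self.entries.append(Feedback(version, helpful, comment))

    def approval_rates(self) -> Dict[str, float]:
        """Share of thumbs-up per version, so a change can be compared with the one before it."""
        totals: Dict[str, int] = defaultdict(int)
        ups: Dict[str, int] = defaultdict(int)
        for entry in self.entries:
            totals[entry.version] += 1
            ups[entry.version] += int(entry.helpful)
        return {version: ups[version] / totals[version] for version in totals}

    def complaints(self, version: str) -> List[str]:
        """Written comments attached to thumbs-down votes: raw material for the next iteration."""
        return [e.comment for e in self.entries if e.version == version and not e.helpful and e.comment]


log = FeedbackLog()
log.record("v1", False, "Answer ignored our refund policy")
log.record("v1", True)
log.record("v2", True)
log.record("v2", True)
print(log.approval_rates())  # {'v1': 0.5, 'v2': 1.0}
print(log.complaints("v1"))  # ['Answer ignored our refund policy']
```

Four votes prove nothing statistically; the point is the mechanics. Tagging every vote with a version is what lets you tell whether a change actually helped.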

The Diagnostic You Can Run Tomorrow

Before you watch another tutorial or read another article about AI trends, run this 3-question diagnostic on any AI system you're working with:

Question 1: Can you explain it? (Interpretability)

If someone asks "why did the AI do that?", can you trace it back?

Do you have logs, audit trails, ways to understand behavior?

Can you predict how it will handle novel situations?

Question 2: Can you control it? (Guardrails)

What boundaries exist? What can the AI NOT do?

What happens when it encounters something outside its scope?

Who can access what, and how is that enforced?

Question 3: Can you improve it? (Human Feedback)

How do you collect feedback on what's working and what's not?

Can you adjust the system based on real-world usage?

Do you have a process for iteration and refinement?

This diagnostic works for ChatGPT, Claude, Microsoft Copilot, Google Gemini, or any custom AI solution. The principles don't change.

Find your gaps. That's where your risk lives—and where your value opportunity is.

Why This Changes Everything for Your Career

Here's the part that matters for you personally:

Tool expertise becomes obsolete. New AI platforms launch constantly. If your value is "I know how to use ChatGPT," you're on a treadmill, constantly re-learning. That's exhausting and unsustainable.

Principle expertise becomes irreplaceable. If your value is "I can evaluate any AI system for safety, explain it to stakeholders, and identify gaps before they become problems," you're valuable regardless of which vendor wins.

This isn't about being anti-tools. It's about understanding what creates lasting value in your career.

When your company inevitably switches AI platforms (and it will), you want to be the person who can evaluate whether the new system is actually better. You want to speak the language that transcends vendors.

What You're Really Seeing Across the Industry

The convergence I'm describing isn't just theory. Look at what's actually happening:

Microsoft builds Purview for data governance, implements security controls, and runs pilot programs before enterprise rollout. That's interpretability, guardrails, and human feedback.

Anthropic develops Constitutional AI for safety, conducts interpretability research on its Claude models, and uses RLHF for alignment. Same three principles, implemented from the research side.

Google, AWS, OpenAI—pick any serious AI deployment. You'll find these same three patterns. The vocabulary changes. The underlying challenges remain constant.

Why does this matter? Because it means you're not learning "how Anthropic does it" or "how Microsoft does it." You're learning how responsible AI deployment works, period.

That knowledge transfers. That knowledge compounds. That knowledge makes you more valuable over time instead of less valuable.

The Vocabulary Trap (And How to Escape It)

Here's the simple hack: whenever someone uses a fancy term you don't recognize, just ask yourself which of the three principles they're really talking about.

Someone mentions "explainability research"? Interpretability. Someone talks about "permission boundaries"? Guardrails. Someone describes "user acceptance testing"? Human feedback.

It's almost always one of the three. Once you see the pattern, you can't unsee it.

This cuts through the confusion. You stop feeling overwhelmed by different terms and start recognizing the same fundamental concepts showing up everywhere.

What to Do Monday Morning

Don't just read this and move on. Actually apply it:

1. Pick one AI system you're using, evaluating, or responsible for at work.

2. Run the 3-question diagnostic (takes 5 minutes):

Can you explain it?

Can you control it?

Can you improve it?

3. Identify your biggest gap. Which principle is weakest in your implementation?

4. Ask one powerful question:

Interpretability weak? Ask: "How would we audit this system's decisions if asked?"

Guardrails weak? Ask: "What would prevent this from doing X harmful thing?"

Feedback weak? Ask: "How do we actually know if this is working as intended?"
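If you'd rather keep score than keep notes, the Monday-morning steps can be sketched in a few lines of Python. Scoring each principle by its number of "yes" answers is an assumption, not part of the diagnostic itself, and the example answers are made up for illustration.

```python
from typing import Dict, List, Tuple

# The diagnostic questions, paraphrased from the checklist earlier in this article.
CHECKS: Dict[str, List[str]] = {
    "Interpretability": [
        "Can you trace 'why did the AI do that?' back to a cause?",
        "Do you have logs, audit trails, or other ways to understand behavior?",
        "Can you predict how it will handle novel situations?",
    ],
    "Guardrails": [
        "Are the boundaries (what the AI can NOT do) defined?",
        "Is there defined handling for requests outside its scope?",
        "Is access (who can use what) actually enforced?",
    ],
    "Human Feedback": [
        "Do you collect feedback on what's working and what's not?",
        "Can you adjust the system based on real-world usage?",
        "Do you have a process for iteration and refinement?",
    ],
}

# Step 4: the one question to ask for whichever principle is weakest.
FOLLOW_UPS: Dict[str, str] = {
    "Interpretability": "How would we audit this system's decisions if asked?",
    "Guardrails": "What would prevent this from doing X harmful thing?",
    "Human Feedback": "How do we actually know if this is working as intended?",
}


def biggest_gap(answers: Dict[str, List[bool]]) -> Tuple[str, str]:
    """Return the principle with the fewest 'yes' answers and its follow-up question."""
    for principle, questions in CHECKS.items():
        if len(answers.get(principle, [])) != len(questions):
            raise ValueError(f"Expected {len(questions)} answers for {principle}")
    scores = {principle: sum(answers[principle]) for principle in CHECKS}
    weakest = min(scores, key=lambda p: scores[p])  # ties go to the principle listed first
    return weakest, FOLLOW_UPS[weakest]


answers = {
    "Interpretability": [True, True, False],
    "Guardrails": [True, False, False],
    "Human Feedback": [True, True, True],
}
gap, question = biggest_gap(answers)
print(f"Biggest gap: {gap}. Ask: {question}")
```

A tally like this is only a prompt for the conversation; a single "no" on access control can matter more than two "yes" answers elsewhere.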

You don't need to solve everything today. You just need to start asking the right questions.

These questions make you more valuable in every conversation about AI deployment. They separate the people who understand AI from the people who just know how to use the current popular tool.

The Real Shift

We're watching the AI field mature in real time. The Wild West phase of "let's see what AI can do" is giving way to a more disciplined era of "how do we ensure AI does what we intend, safely and reliably?"

This maturation is happening across research labs, enterprise deployments, startups, and government agencies. Everyone's arriving at the same fundamental principles, just from different angles.

You can either keep chasing the latest tool release, exhausting yourself trying to stay current with every new feature.

Or you can learn the principles that make you valuable regardless of which tools exist tomorrow.

The choice is yours. But I know which approach actually future-proofs a career.

Your Turn

I want to know what you discover:

Run the 3-question diagnostic on an AI system you're working with. Which gap did you find? Interpretability? Guardrails? Feedback? Where are you strongest?

What surprised you? Did you realize you had better guardrails than you thought? Did you discover a massive interpretability gap you'd been ignoring?

Drop your findings in the comments. The more we share what we're learning, the better our collective understanding becomes. And honestly? I'm still learning this stuff too. Every conversation reveals new patterns.

Stop learning tools. Start recognizing principles. Everything else gets easier.

Jeremy Mckellar is a Connector, Creative, and Tech Futurist focused on making technology meaningful and accessible. Connect with him on LinkedIn or follow his thoughts on technology at JeremyMckellar.com.

AI Collaboration Disclosure: This article was developed in collaboration with AI as a thinking partner to help crystallize and organize the patterns I've been noticing across different AI implementations. I believe AI tools can amplify our insights when used thoughtfully—consider exploring how these tools might enhance your own strategic thinking.